Is Capacity Optimization relevant in the era of Kubernetes?

- By Andrea Gallo

27 Dec

With the growth of automated, scalable frameworks, such as Kubernetes, hosted on flexible (Hybrid) Cloud or PaaS infrastructures, many IT organizations have concluded that capacity planning as we know it has completed its mission. More and more practitioners are becoming convinced that applications based on automatically scalable infrastructure architectures do not require particular attention for performance and capacity planning.

Do you believe cloud solutions (AWS, Azure, GCP) and container engines (Kubernetes, OpenShift) are the holy grail that can finally give you absolute confidence that your applications will never run out of resources or grind to a halt? If so, you may want to read on and check these commonly held assumptions about living in the Cloud.

Budget, Space and Time: A Brief History of Capacity Optimization

On-premises infrastructure capacity optimization was designed to balance business performance against budget, physical space, and turnaround time constraints.

As companies were growing their on-premises infrastructure, capacity optimization became fundamental. In planning for future on-premises needs, organizations were dealing with:

- Budget: How much money should be spent on running and maintaining the IT infrastructure.

- Space: How much physical space was available in the data center to plug in new appliances and devices.

- Time: How quickly new hardware could be provisioned to meet the incoming business demand. The on-premises procurement process could take a long time, sometimes months.

As businesses grew, companies needed to adapt and determine which of the three constraints would most limit their growth, transforming this trade-off into a business decision. Implementing a new initiative, anticipating a surge in transaction volumes and digital usage, and planning for a hardware refresh were all activities that competed for the same resources and had to be coordinated centrally to manage risk.

Over the years, companies created models to accurately forecast and plan the organization’s compute, network, and storage needs. Solutions were designed to centralize this effort instead of manually updating thousands of spreadsheets. In 2000, Neptuny (now Moviri) created Caplan Capacity Optimization precisely with this goal in mind and, in 2010, sold the capacity management product to BMC Software. The solution became the de facto market leader, evolving into what we know today as the BMC Helix Optimize solution.

The advent of virtualization 15 years ago led some to think that capacity management was on its deathbed. History proved them wrong, as virtualization technologies introduced new capacity challenges that subject matter experts had to address with data-driven approaches.

With the recent growth of automated, scalable frameworks, such as Kubernetes, hosted on flexible (Hybrid) Cloud or PaaS infrastructures, companies and analysts once again have come to expect the end of capacity planning.

“Capacity management is responsible for ensuring that adequate capacity is available at all times to meet the agreed needs of the business in a cost-effective manner.”

Dennis Newberry, Executive Director, Product Management at BMC

Conventional Wisdom Can Be Dangerous

If your work involves managing cloud infrastructure and architectures, you may have heard claims of capacity planning’s imminent demise.

“Our cloud vendors will take care of automatically scaling our applications, ensuring a reliable user experience.”

In some cases, that statement approximates reality. Yet delegating infrastructure scaling entirely to a vendor will most likely result in unplanned expenditure, adding your company to the 70% of companies worldwide that had to delay or prolong their cloud migration due to budgeting concerns (still better than the 20% that abandoned the migration altogether). Responsible cloud management must always keep an eye on availability and on both incurred and planned costs.
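Keeping an eye on incurred and planned costs can start with a simple run-rate projection. The sketch below is only an illustration under stated assumptions: the daily cost figures, the 30-day month, and the names `BudgetStatus` and `project_month_end` are hypothetical, and a real process would likely use a richer forecasting model than a flat average.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class BudgetStatus:
    incurred: float     # spend observed so far in the period
    projected: float    # naive end-of-period projection
    planned: float      # approved budget for the period
    over_budget: bool   # True when the projection exceeds the plan


def project_month_end(daily_costs: Sequence[float], days_in_month: int,
                      planned: float) -> BudgetStatus:
    """Project end-of-month spend from the average daily cost seen so far."""
    if not daily_costs:
        raise ValueError("need at least one day of cost data")
    incurred = sum(daily_costs)
    run_rate = incurred / len(daily_costs)   # average cost per day
    projected = run_rate * days_in_month     # assumes the run rate stays flat
    return BudgetStatus(incurred, projected, planned, projected > planned)


# Hypothetical figures: three days of spend against a 3,000 monthly budget.
status = project_month_end([100.0, 120.0, 140.0], days_in_month=30, planned=3000.0)
print(status)
```

A flat run rate is the simplest possible assumption; it is useful mainly as an early warning that autoscaling is spending faster than the plan allows.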

“Application developers are the ones responsible for correctly sizing resource allocation for the application container.”

Container engines rely heavily on resource allocation to determine whether to schedule a container and where. Configuring a container with misleading values can lead the Kubernetes scheduler to leave a workload unscheduled because of insufficient resources (when the container requests too much) or to kill it unnecessarily (when it is given too little). Properly sizing containers is critical, particularly in an auto-provisioned environment. Capacity and performance engineers must oversee the process to avoid the “first come, first served” approach, where teams request more resources than they can justify only to avoid having to ask for more in the future.
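One data-driven way to size a container is to derive its resource request from observed usage rather than from a guess. The following is a minimal sketch, not a Kubernetes API call: the 90th percentile, the 20% headroom, the 25% tolerance, and the sample values (CPU in millicores) are all assumptions chosen for illustration.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of the observed usage samples."""
    if not samples:
        raise ValueError("no usage samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend_request(samples: Sequence[float], pct: float = 90.0,
                      headroom: float = 0.2) -> float:
    """Suggested request: a high percentile of usage plus a safety margin."""
    return percentile(samples, pct) * (1 + headroom)


def classify(current_request: float, samples: Sequence[float],
             tolerance: float = 0.25) -> str:
    """Compare the configured request with the data-driven suggestion."""
    target = recommend_request(samples)
    if current_request > target * (1 + tolerance):
        return "over-provisioned"
    if current_request < target * (1 - tolerance):
        return "under-provisioned"
    return "right-sized"


# Hypothetical CPU usage samples in millicores, including one spike.
cpu_usage = [100, 120, 110, 130, 150, 140, 125, 115, 135, 400]
print(recommend_request(cpu_usage))
print(classify(500, cpu_usage), classify(100, cpu_usage))
```

Using a percentile instead of the maximum keeps a single spike from inflating the request, while the headroom leaves room for normal variation.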

“Moving to the cloud will remove every technical bottleneck.”

Bottlenecks can’t be entirely removed; instead, they are shifted. Cloud technologies have limits too, and monitoring and modeling usage against those limits helps prevent automated horizontal scaling from failing and, with it, service reliability from degrading. These limits can be technical (e.g., number of instances in a region, number of reserved IPs) or process-related (e.g., assigned budget). An experienced Head of Infrastructure and Cloud Operations knows these limitations, as they make the difference between a successful cloud implementation and an abandoned initiative.
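To make the idea of modeling usage against limits concrete, the sketch below estimates how many more replicas an application could add before some limit, technical or budgetary, is hit. The limit names, quotas, and per-replica consumption figures are hypothetical, and no cloud provider API is involved; in practice these values would come from the provider's quota reports and the billing data.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Limit:
    name: str      # hypothetical limit name
    used: float    # amount currently consumed
    quota: float   # hard ceiling (technical quota or assigned budget)


def scaling_headroom(limits: List[Limit],
                     per_replica: Dict[str, float]) -> Tuple[int, str]:
    """Return how many replicas can still be added and which limit binds first."""
    best_count = None
    best_name = ""
    for limit in limits:
        cost = per_replica.get(limit.name, 0.0)
        if cost <= 0:
            continue  # adding a replica does not consume this limit
        remaining = max(0.0, limit.quota - limit.used)
        count = math.floor(remaining / cost)
        if best_count is None or count < best_count:
            best_count, best_name = count, limit.name
    if best_count is None:
        raise ValueError("no limit is affected by scaling")
    return best_count, best_name


# Hypothetical limits: two technical quotas and one process limit (budget).
limits = [
    Limit("instances_in_region", used=18, quota=20),
    Limit("reserved_ips", used=40, quota=64),
    Limit("monthly_budget_usd", used=7000, quota=10000),
]
per_replica = {"instances_in_region": 1, "reserved_ips": 2, "monthly_budget_usd": 350}
replicas, binding = scaling_headroom(limits, per_replica)
print(replicas, binding)
```

Knowing which limit binds first tells the team where the bottleneck has shifted to, before the autoscaler discovers it in production.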

Capacity Optimization for the Cloud

Capacity Optimization is relevant in the cloud era precisely because the cloud removes the friction at the foundation of budget controls. Accurately describing the recent past, defining the current reality, and forecasting the future are of paramount importance, especially when budgets are constrained.

Indeed, cloud and container technologies do not magically resolve or eliminate the performance question that created the need for capacity optimization in the first place. They ease the time and space constraints typical of on-premises infrastructure while introducing non-trivial difficulty in managing the budget.

Cloud infrastructure capacity optimization is now focused on budget and budget management. Therefore, we believe that capacity and cloud cost management should be a top priority for today’s CIOs.

How We Do It: Business-Aware Capacity Planning

As performance and capacity experts, our goal is to ensure that our customers are running the most efficient IT infrastructure possible to support the business. Our mantra is clear: analyze the past, manage the present, plan for the future.

First and foremost, the IT cost and resource optimization process needs to be nurtured and supported by company-wide initiatives. It must be:

- Systematic: It must thoroughly assess the current infrastructure’s status and past trends, discover risks, identify improvement opportunities, and reclaim resources.

- Enterprise-wide: Inefficiencies can happen at every level of the IT stack and across the organization. Reclaiming resources requires the involvement of a wide range of teams and stakeholders.

- Automated: In an always-changing world with cloud instances spawned continuously on-the-fly and containerized applications, it is not humanly possible to manually collect all the data you need. Ad-hoc tasks need to be scheduled to collect, reconcile, and present information to decision-makers.

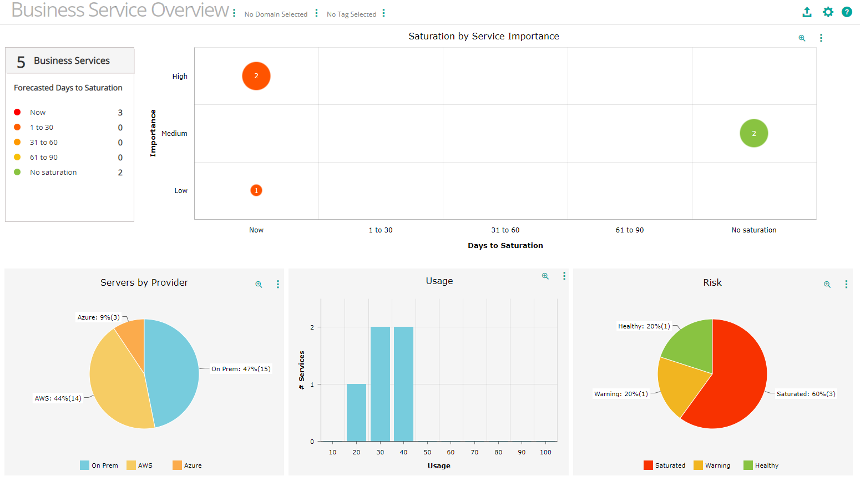

Still, complete visibility of the IT infrastructure is effectively useless without the service context. Hosts and applications are deeply interconnected. Even with the most accurate algorithms, it will not be easy to decommission them if we cannot reliably map the business implications of our decisions.

Optimizing IT costs and resources requires a solution to collect capacity and performance-relevant metrics and application and service contexts, providing a complete map of the environment.

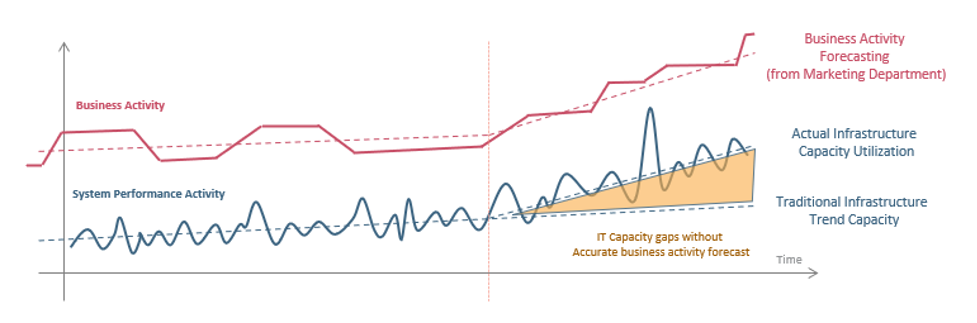

While working to optimize the infrastructure, it is essential to start planning for the future. Forecasting costs and resource utilization in a mature capacity management process means analyzing the effects and searching for the causes. Specifically, it correlates cost and utilization drivers to actual business demand, e.g., number of users, processed orders, visits, or employee growth.
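Correlating cost with a business driver can be sketched with an ordinary least-squares line. This is an illustrative simplification rather than the model used by any particular product: the monthly order volumes and costs below are made-up numbers, and a single linear driver is an assumption that real workloads may not satisfy.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired observations")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("the business driver does not vary")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast_cost(driver_value: float, slope: float, intercept: float) -> float:
    """Predict cost for an expected level of business demand."""
    return slope * driver_value + intercept


# Hypothetical history: processed orders per month and cloud cost per month.
orders = [1000, 2000, 3000, 4000]
cost = [700, 1200, 1700, 2200]
slope, intercept = fit_line(orders, cost)
print(slope, intercept, forecast_cost(6000, slope, intercept))
```

Here the slope reads as the marginal cost per processed order and the intercept as the fixed cost of keeping the service running, which is the kind of relationship a business owner can reason about.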

This task is not easy, but the outcomes are invaluable: without a proper Business-Aware Capacity Planning process, we would either overspend (leading to unnecessary costs) or not spend enough (leading to lost business, poor user experience, and increased expenses for emergency hardware provisioning).

The capacity planning team must work closely with application and service owners who have the insights to predict (or who are even responsible for) any change in system workloads. Marketing campaigns, product launches, and new client onboarding are events that impact cloud capacity needs and must be planned for.

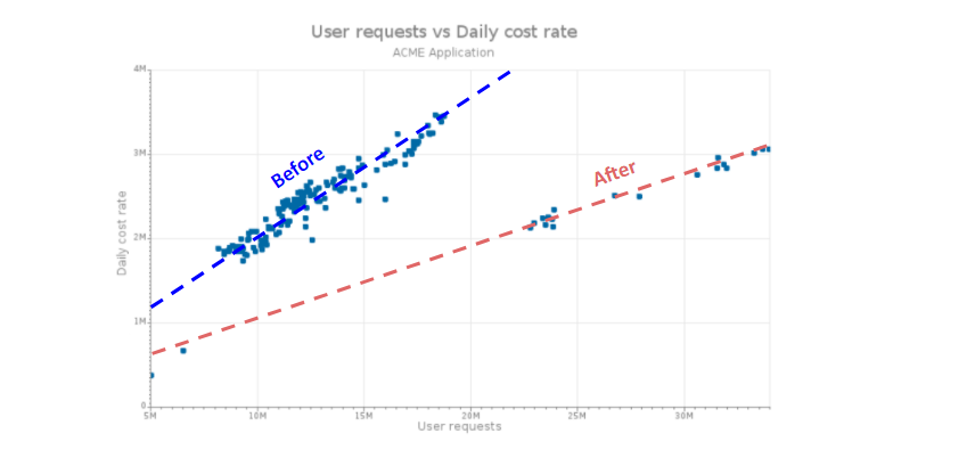

“Correlation models used for planning can also evaluate a new application release’s efficiency and provide feedback to developers. If, after an upgrade, an application requires additional nodes to scale correctly, the capacity planning team will have to evaluate the impact on the planned budget.”

Andrea Gallo, Head of US Operations, Moviri

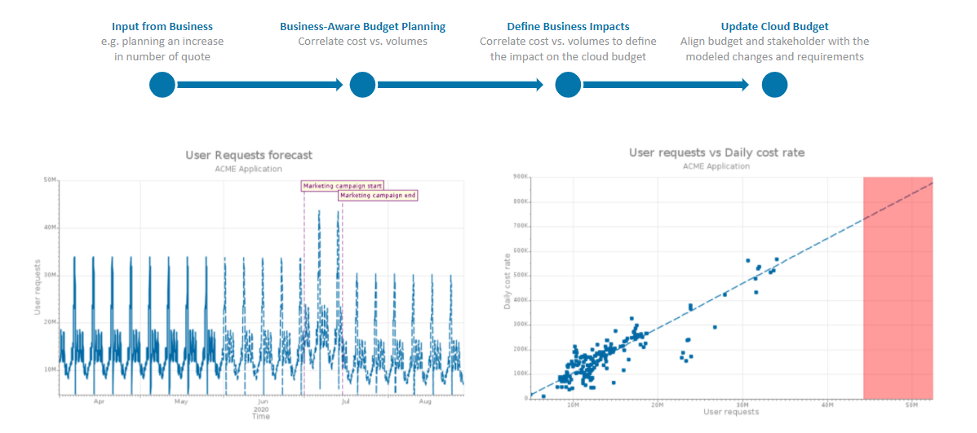

Accurate models help with planning, also unlocking other benefits by better understanding usage patterns.

In the example below, services were used ‘before’ in a ‘sub-optimal state’. The ‘after’ trend suggests we satisfy more user requests at a lower daily cost rate, meaning we can keep both these changes and our budget.
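The before/after comparison boils down to a unit-cost calculation. The sketch below uses invented daily figures, not the data behind the article's chart, to show how cost per request and its relative change could be computed.

```python
def cost_per_request(daily_cost: float, daily_requests: int) -> float:
    """Unit cost: what one served request costs on an average day."""
    if daily_requests <= 0:
        raise ValueError("daily_requests must be positive")
    return daily_cost / daily_requests


def relative_change(before: float, after: float) -> float:
    """Fractional change from before to after (negative means cheaper)."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


# Hypothetical figures for the two periods.
before = cost_per_request(daily_cost=1000.0, daily_requests=200_000)
after = cost_per_request(daily_cost=900.0, daily_requests=300_000)
change = relative_change(before, after)
print(f"before={before:.4f} after={after:.4f} change={change:.0%}")
```

Tracking unit cost rather than total cost separates genuine efficiency gains from changes that are simply due to more or less traffic.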

In sum, with input from stakeholders and business processes, the capacity optimization team can deploy its modeling and forecasting tooling to:

- Update the Business-Aware Capacity Planning model, correlating cost and resource utilization with volumes

- Define the business impact of the received input, including it in the Capacity Planning model

- Update the cloud budget, aligning budget and stakeholders with the changes observed in the model.

Why Work with Us

Capacity optimization is still very relevant in the cloud era. It can play a pivotal role in ensuring the proper budgeting for cloud-native technologies and automated, scalable containerization frameworks. These technologies are moving too fast, and manually managing this process has become untenable.

The complexity raised by an ever-changing environment requires experts in practices, tools, and technologies to achieve the expected results. Over the past 20+ years, Moviri has played a pivotal role in helping the most technology-intensive corporations automate and streamline their capacity optimization processes, and it continues to do so as they embrace the Cloud.

We believe that a successful capacity optimization process starts with a holistic, enterprise-wide, and future-proof solution able to support not only legacy and current technologies but also the ones that will drive tomorrow’s IT infrastructure. We continue to believe that the BMC Helix Optimize solution is the best in the market to achieve this goal.

We are here to help your organization. In the meantime, to start your journey by experiencing first-hand the BMC Helix Capacity Optimization solution, you can request a free trial here.


© 2022 Moviri S.p.A.
