18 Oct

The benefits of AI personalization for your fashion e-commerce

To stand out from the crowd, a fashion brand must truly understand its customers’ preferences and offer them a personalized experience throughout the entire purchase journey.

28 Sep

Moviri joins Arduino Pro System Integrators Partnership Program

Thanks to its close partnership with Arduino, Moviri can deploy unique capabilities across the entire industrial IoT stack, from devices to the cloud.

13 Sep

Akamas releases live optimization for Kubernetes applications

Akamas’ new product release enhances its AI-powered optimization platform with key new capabilities to continuously optimize production environments.

01 Sep

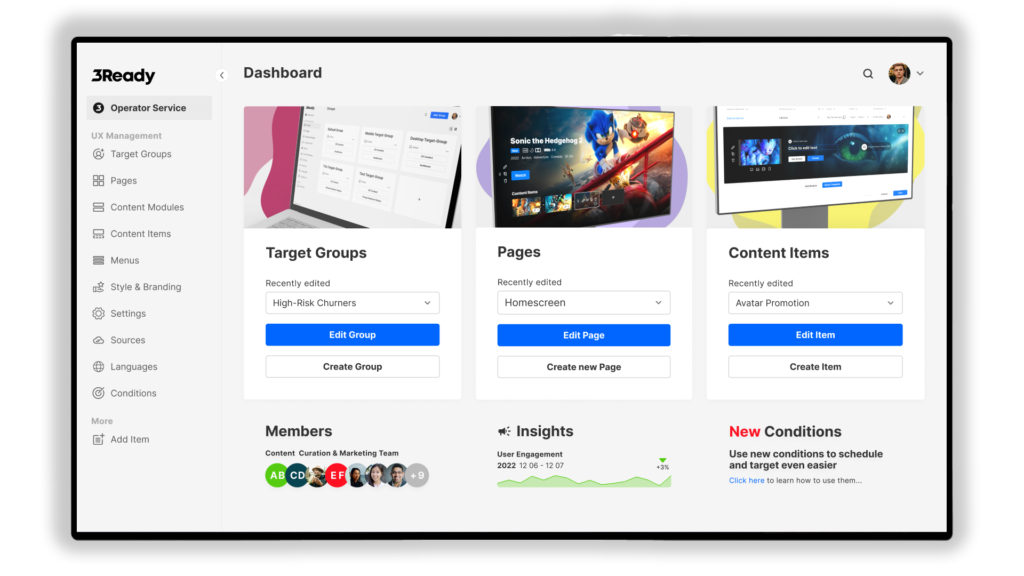

Allente at the vanguard of next-gen entertainment UX with 3SS and ContentWise

3 Screen Solutions and ContentWise announce that Nordic pay-TV giant Allente has become the first operator to deploy 3Ready Control Center 3.0.

11 Aug

Akamas joins AWS Partner Network

Akamas’ AI-powered performance optimization helps AWS cloud customers consistently achieve higher performance at the lowest possible cost.

08 Jun

Ready to transform the enterprise world? We are!

Exciting news about how Arduino is forging new partnerships with Bosch, Renesas, Anzu Partners, and Arm that will take things to new levels.

02 Jun

How to improve the quality of e-commerce catalogs and reduce product returns

Tips for a high-converting digital product catalog. Improve search engine rankings and on-site product discovery experience. Forecast customer trends.

31 May

KubeCon Europe 2022 – all about Kubernetes, autoscalers, and performance optimization

Autoscalers can’t always find the best cost-performance tradeoff. We presented our Kubernetes performance tuning solution at KubeCon Europe 2022.

17 May

ContentWise welcomes 3SS as a new partner

3SS and ContentWise have signed a partnership to unleash the power of AI for personalized user experiences.

09 May

Akamas and Gremlin announce a new partnership

Akamas and Gremlin signed a new partnership to create a combined Chaos Engineering and Autonomous Optimization practice.